Enthalpy vs Entropy — Thermodynamic Properties Comparison

Enthalpy and entropy are two of the most fundamental, yet often misunderstood, concepts in thermodynamics. They describe different aspects of how systems store energy, distribute it, and undergo change. Although both belong to the same thermodynamic framework, the ideas they represent are distinct. Enthalpy is primarily associated with heat content and heat transfer during physical and chemical processes, while entropy reflects the degree of dispersal, randomness, or freedom available to the components of a system. Comparing them in a descriptive, conceptual way, without relying on formulas, makes it possible to appreciate the role each plays on its own and the ways they work together within the broader landscape of thermodynamic behavior. Exploring their meanings, physical implications, and importance in natural phenomena shows why these two properties form the backbone of energy science, and why understanding them gives deeper insight into the direction, spontaneity, and feasibility of changes throughout the physical world.

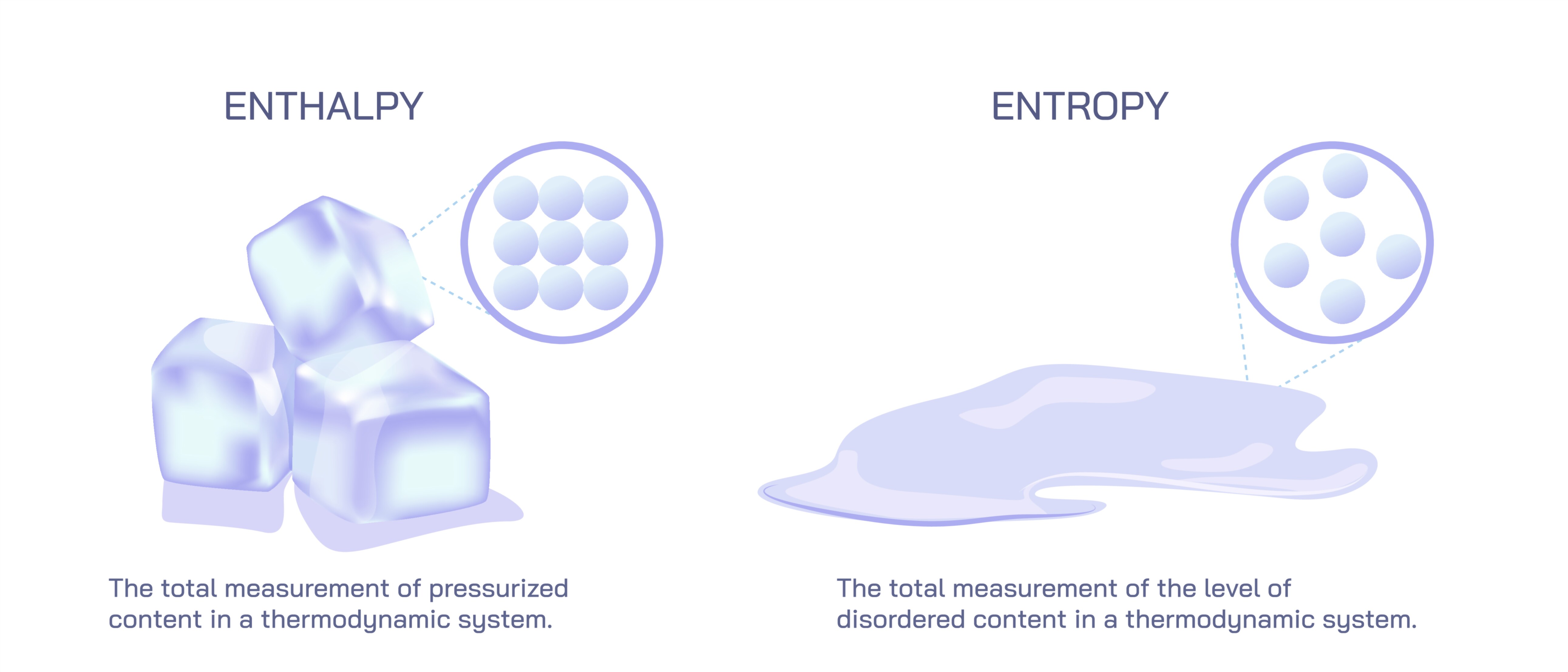

Enthalpy is a measure of a system's internal energy plus the work needed to push back its surroundings and make room for it at constant pressure. It captures the idea that substances store energy in their molecular interactions, bonding arrangements, and phase states. Because most real-world processes, from boiling water to chemical reactions in the atmosphere, occur at or near constant pressure, enthalpy is an immensely useful way to track heat: at constant pressure, when the only work done is expansion against the surroundings, the heat that enters or leaves a system equals its change in enthalpy. When ice melts, for example, the enthalpy change accounts for the energy absorbed to free water molecules from their rigid crystalline arrangement. When fuel burns, it describes the energy released as chemical bonds rearrange. Enthalpy thus acts as an energetic bookkeeper, accounting for how heat flows during transformations and how energy stored in matter is released to do work or absorbed from the surroundings.
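
As a rough illustration of enthalpy as heat at constant pressure, the sketch below estimates the heat needed to melt a given mass of ice. It assumes a molar enthalpy of fusion of about 6.01 kJ/mol and a molar mass of 18.015 g/mol for water; the function name `heat_to_melt_ice` is hypothetical.

```python
# Heat absorbed when ice melts at constant pressure (0 °C, 1 atm).
# Assumed values: molar enthalpy of fusion of water ~6.01 kJ/mol,
# molar mass of water ~18.015 g/mol.
DELTA_H_FUS_KJ_PER_MOL = 6.01
MOLAR_MASS_WATER_G_PER_MOL = 18.015


def heat_to_melt_ice(mass_g: float) -> float:
    """Return the heat (kJ) absorbed to melt `mass_g` grams of ice at 0 °C.

    At constant pressure the heat exchanged equals the enthalpy change,
    so q = n * delta_H_fus, with n the number of moles of water.
    """
    moles = mass_g / MOLAR_MASS_WATER_G_PER_MOL
    return moles * DELTA_H_FUS_KJ_PER_MOL


if __name__ == "__main__":
    print(f"{heat_to_melt_ice(100.0):.1f} kJ")  # about 33.4 kJ for 100 g
```

With these assumed values, melting 100 g of ice absorbs roughly 33 kJ, all of which goes into breaking up the crystal rather than raising the temperature.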

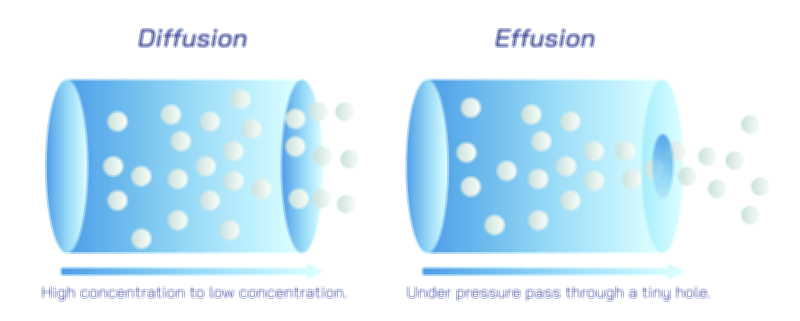

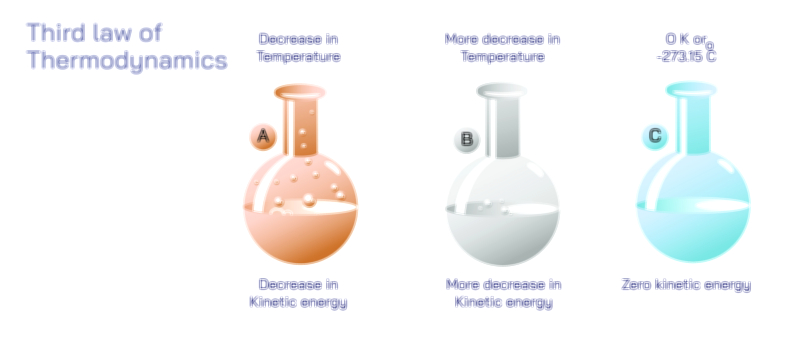

Entropy, in contrast, reflects the tendency of systems to evolve toward states of greater freedom and energy dispersal. While enthalpy speaks of energy quantity, entropy speaks of energy quality: how spread out, accessible, or organized that energy is. Entropy does not simply describe disorder in a casual sense; it expresses how many possible microscopic arrangements correspond to a given macroscopic state. When a solid melts into a liquid or a liquid evaporates into a gas, the particles gain greater freedom of motion and the number of accessible configurations rises sharply, which corresponds to an increase in entropy. The spontaneous mixing of gases, the diffusion of perfume through a room, the melting of ice on a warm day, and the equalization of temperature between objects in contact all illustrate how entropy pushes systems toward uniformity and maximum energy dispersal. Entropy therefore gives natural processes a powerful sense of direction, explaining why certain changes occur without external intervention while others require a continuous input of energy.
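
To make the link between microstates and entropy concrete, here is a toy model, assuming N distinguishable particles that can each sit in either the left or the right half of a box. It counts the microstates W for each macrostate (how many particles are on the left) with a binomial coefficient and applies Boltzmann's relation S = k_B ln W; the helper names are illustrative only.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def microstates(n_total: int, n_left: int) -> int:
    """Number of ways to place n_left of n_total distinguishable particles
    in the left half of a box (the rest go in the right half)."""
    return math.comb(n_total, n_left)


def boltzmann_entropy(w: int) -> float:
    """Boltzmann entropy S = k_B * ln(W) for W microstates, in J/K."""
    return K_B * math.log(w)


if __name__ == "__main__":
    n = 10
    for left in range(n + 1):
        w = microstates(n, left)
        print(f"{left} on left: W = {w}, S = {boltzmann_entropy(w):.3e} J/K")
```

For ten particles, the evenly split macrostate has 252 microstates while "all on one side" has just one, so the spread-out state carries the highest entropy.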

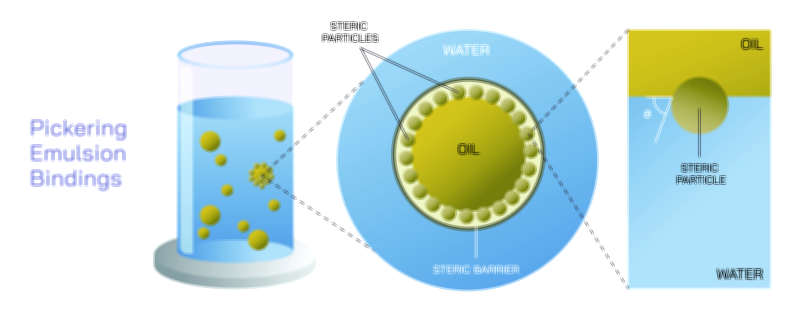

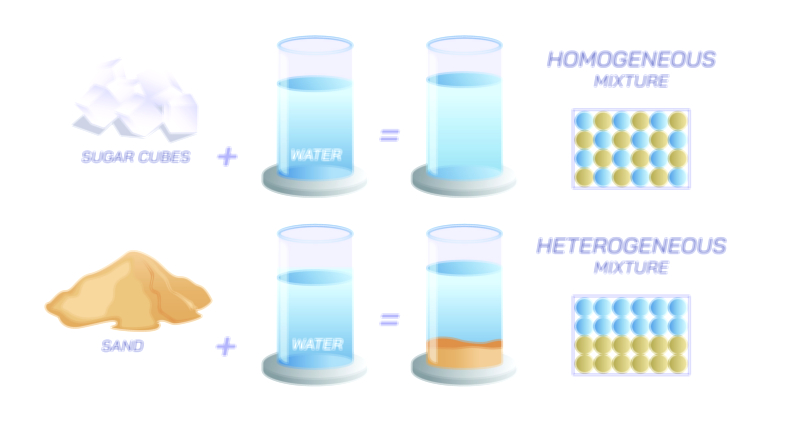

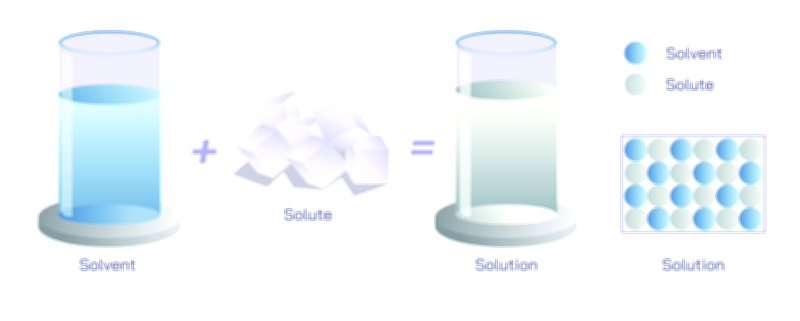

Although enthalpy and entropy describe different aspects of thermodynamic behavior, together they determine whether a process can occur spontaneously. A chemical reaction or physical change may require energy to proceed, or it may release energy into the surroundings. The enthalpy component reveals whether the system absorbs heat from its environment or releases it. The entropy component reveals whether the process increases or decreases the degree of disorder or energy dispersal. For example, dissolving table salt in water absorbs a small amount of heat, which might seem unfavorable based on enthalpy alone, yet the increase in entropy as ions disperse throughout the water more than compensates for that energetic cost, so the salt dissolves spontaneously. Conversely, freezing water releases heat, a favorable enthalpy change, yet it decreases entropy by locking molecules into a crystalline structure. Freezing is therefore spontaneous only below the melting point, where the heat released raises the entropy of the surroundings by more than the water's own entropy falls.
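
The competition described here is commonly summarized by the Gibbs free energy change, ΔG = ΔH − TΔS, which is negative for a spontaneous process at constant temperature and pressure. The sketch below applies it to both examples, assuming approximate standard values for dissolving NaCl (ΔH ≈ +3.88 kJ/mol, ΔS ≈ +43.4 J/(mol·K)) and, for freezing, ΔH ≈ −6.01 kJ/mol with ΔS taken as ΔH divided by the melting temperature. It ignores how these quantities vary with temperature and concentration.

```python
def gibbs_change(delta_h: float, delta_s: float, temperature: float) -> float:
    """Return delta_G = delta_H - T * delta_S in J/mol.

    delta_h in J/mol, delta_s in J/(mol*K), temperature in K.
    A negative result means the process is spontaneous at constant T and P.
    """
    return delta_h - temperature * delta_s


# Approximate standard values for NaCl(s) -> Na+(aq) + Cl-(aq) near 298 K.
NACL_DH = 3.88e3  # J/mol, slightly endothermic
NACL_DS = 43.4    # J/(mol*K), entropy increases

# Freezing of water, treated as temperature-independent (an assumption).
FREEZE_DH = -6.01e3             # J/mol, heat released
FREEZE_DS = FREEZE_DH / 273.15  # J/(mol*K), about -22.0 (entropy decreases)

if __name__ == "__main__":
    print("NaCl dissolving:", round(gibbs_change(NACL_DH, NACL_DS, 298.15)), "J/mol")
    for t in (263.15, 273.15, 283.15):
        print(f"Freezing at {t} K:", round(gibbs_change(FREEZE_DH, FREEZE_DS, t)), "J/mol")
```

With these inputs, dissolving salt comes out at roughly −9 kJ/mol, and freezing is favorable at 263 K, balanced at 273 K, and unfavorable at 283 K.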

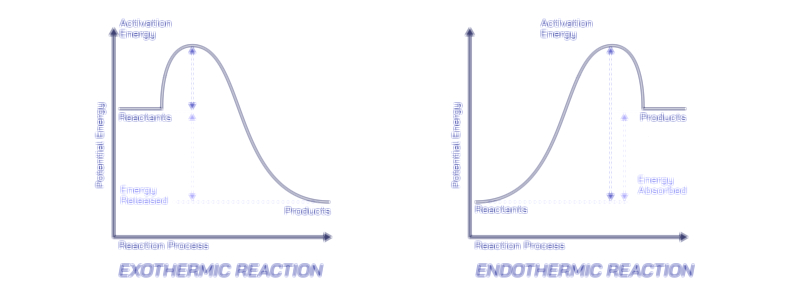

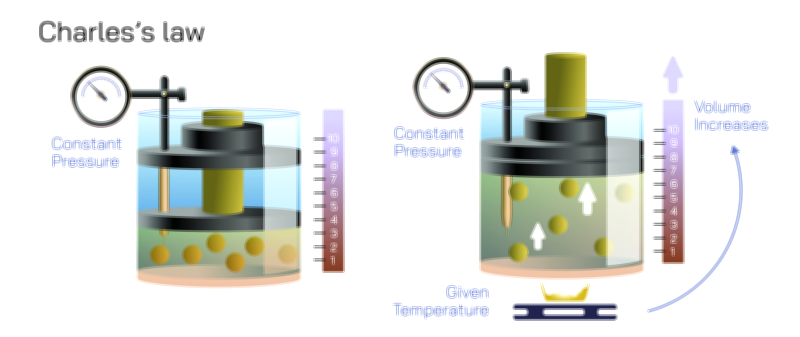

Enthalpy plays a crucial role in how materials respond to heating and cooling. When a substance is heated at constant pressure, energy flows into the system and its enthalpy increases. The added energy can appear as more vigorous molecular motion, the overcoming of intermolecular attractions, or a change of phase. Once liquid water reaches its boiling point, for instance, additional energy no longer raises its temperature; instead it overcomes the intermolecular attractions that hold molecules in the liquid state. This energy is the enthalpy of vaporization, which shows why enthalpy changes are essential for understanding latent heat and phase behavior. In chemical reactions, the balance between the energy needed to break bonds and the energy released when new bonds form determines the enthalpy change. Exothermic reactions release energy and decrease the system's enthalpy, while endothermic reactions absorb energy and increase it. These changes matter for temperature control, reaction feasibility, and energy management in power generation, industrial synthesis, and biological metabolism.
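
The bond-breaking and bond-forming picture can be turned into a quick estimate. The sketch below assumes typical average bond enthalpies (H-H about 436, Cl-Cl about 242, H-Cl about 431 kJ/mol) and computes ΔH for H2 + Cl2 → 2 HCl as the energy put in to break bonds minus the energy released by forming new ones. Average bond enthalpies only give approximations; here the estimate of −184 kJ/mol lands close to the value of roughly −185 kJ/mol obtained from tabulated enthalpies of formation.

```python
# Assumed average bond enthalpies in kJ/mol (typical textbook values).
BOND_ENTHALPY = {"H-H": 436, "Cl-Cl": 242, "H-Cl": 431}


def reaction_enthalpy(broken: dict, formed: dict) -> int:
    """Estimate delta_H (kJ/mol) as energy to break bonds minus energy
    released by forming bonds.

    `broken` and `formed` map bond labels to the number of bonds involved.
    """
    energy_in = sum(BOND_ENTHALPY[b] * n for b, n in broken.items())
    energy_out = sum(BOND_ENTHALPY[b] * n for b, n in formed.items())
    return energy_in - energy_out


if __name__ == "__main__":
    # H2 + Cl2 -> 2 HCl: break one H-H and one Cl-Cl, form two H-Cl.
    dh = reaction_enthalpy({"H-H": 1, "Cl-Cl": 1}, {"H-Cl": 2})
    print(dh, "kJ/mol", "(exothermic)" if dh < 0 else "(endothermic)")
```

Reversing the reaction simply swaps the roles of broken and formed bonds, turning the exothermic estimate into an endothermic one of the same size.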

Entropy shapes processes in its own distinct, often unexpected way. It drives diffusion, mixing, and other natural spreading tendencies that appear effortless but are rooted in the second law of thermodynamics. A drop of ink dispersing in water shows entropy at work: no stirring or external force is needed because the system moves spontaneously toward a more dispersed state. Entropy also underlies the irreversible nature of many everyday events. A shattered glass does not spontaneously reassemble, not because doing so would violate energy conservation, but because an intact glass corresponds to an extraordinarily small set of configurations. The overwhelmingly larger number of configurations associated with scattered pieces makes that state vastly more probable, in line with an increase in entropy. Natural processes tend to follow pathways that expand the number of accessible possibilities, which is why entropy is central to determining the direction of spontaneous change.
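
The improbability of spontaneous "unmixing" can be quantified with a simple model, assuming each molecule moves independently and is equally likely to be found in either half of a container. The chance that all of them gather in one half at the same moment is (1/2)^N, which collapses rapidly as N grows; `prob_all_in_one_half` is a hypothetical helper name.

```python
from fractions import Fraction


def prob_all_in_one_half(n_molecules: int) -> Fraction:
    """Exact probability that all n independent molecules are in the left half,
    assuming each is equally likely to be in either half."""
    return Fraction(1, 2) ** n_molecules


if __name__ == "__main__":
    for n in (1, 10, 100):
        print(n, float(prob_all_in_one_half(n)))
```

Even 100 molecules give a probability below 10^-30, and a real drop of ink contains vastly more, which is why the dispersed state is never observed to reverse on its own.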

Enthalpy and entropy together provide insight into the behavior of natural systems at multiple scales. In atmospheric science, enthalpy explains how the latent heat released when water vapor condenses fuels the growth of storms, while the tendency toward higher entropy is reflected in winds that act to even out temperature and pressure differences. In geology, enthalpy governs the melting and solidification of rock deep beneath Earth's surface, while entropy contributes to the mixing and disordering processes that shape mineral composition. In biology, enthalpy accounts for the energy stored in molecular bonds such as those in glucose, while entropy plays a central role in protein folding, where the arrangement of surrounding water molecules and the chain's internal flexibility help determine which configurations are most stable.

In engineered systems, enthalpy typically appears when analyzing heat exchange, combustion, refrigeration, or energy conversion. Heat engines, for instance, convert part of the heat flowing from a high-temperature reservoir to a low-temperature one into mechanical work, and in devices such as steam turbines that work is tracked as a drop in the working fluid's enthalpy. Refrigerators and air conditioners remove heat from enclosed spaces by managing enthalpy flows through controlled phase changes of a refrigerant. Entropy, on the other hand, reveals the unavoidable limits of such systems. No matter how well a heat engine is designed, it must reject part of the heat it takes in to the surroundings, where that energy can no longer be used to do work. This principle shapes engine performance, sets an upper bound on efficiency that depends only on the reservoir temperatures, and explains why a machine that turns heat entirely into work, a form of perpetual motion, is impossible.
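
The entropy limit on heat engines has a well-known quantitative form: no engine operating between absolute temperatures T_hot and T_cold can exceed the Carnot efficiency 1 − T_cold/T_hot. The sketch below computes that bound and the minimum heat that must be rejected to the cold reservoir; the reservoir temperatures in the example are arbitrary illustrative values.

```python
def carnot_efficiency(t_hot: float, t_cold: float) -> float:
    """Upper bound on the fraction of heat from the hot reservoir converted to work.

    Temperatures must be absolute (kelvin), with t_hot > t_cold > 0.
    """
    if not (t_hot > t_cold > 0):
        raise ValueError("require t_hot > t_cold > 0 (kelvin)")
    return 1.0 - t_cold / t_hot


def minimum_heat_rejected(q_hot: float, t_hot: float, t_cold: float) -> float:
    """Smallest heat that must be dumped into the cold reservoir.

    The entropy q_hot / t_hot drawn from the hot side must at least reappear
    as q_cold / t_cold on the cold side, so q_cold >= q_hot * t_cold / t_hot.
    """
    return q_hot * t_cold / t_hot


if __name__ == "__main__":
    print(carnot_efficiency(800.0, 300.0))               # 0.625
    print(minimum_heat_rejected(1000.0, 800.0, 300.0))   # 375.0 (J per 1000 J taken in)
```

An engine running between 800 K and 300 K can convert at most 62.5% of its heat intake into work, so at least 375 J of every 1000 J drawn from the hot side ends up in the cold reservoir.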

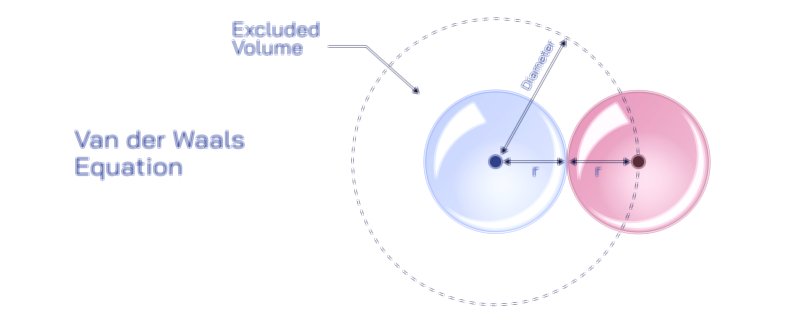

Another key difference between enthalpy and entropy lies in what they are sensitive to. Enthalpy relates closely to chemical structure and bonding, so it depends strongly on how molecules store energy internally. Entropy reflects freedom of motion and the distribution of energy, so it is sensitive to the spacing and arrangement of particles. For example, compressing a gas at constant temperature decreases its entropy by forcing the particles into a smaller volume and reducing their positional freedom. Heating the compressed gas increases its entropy again by spreading the particles over a wider range of energies. Because entropy responds to space and energy distribution while enthalpy tracks energy content, the two can change in the same or opposite directions during a process, so they may compete or reinforce each other depending on the circumstances.
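
For an ideal gas, the two effects in this example can be calculated separately with the standard relation ΔS = n C_v ln(T2/T1) + n R ln(V2/V1). The sketch assumes a monatomic ideal gas (C_v = 3/2 R) with a temperature-independent heat capacity; the function name is illustrative.

```python
import math

R = 8.314  # gas constant, J/(mol*K)

# Assumption: monatomic ideal gas with constant molar heat capacity.
CV_MONATOMIC = 1.5 * R


def ideal_gas_entropy_change(n: float, cv: float, t1: float, t2: float,
                             v1: float, v2: float) -> float:
    """delta_S (J/K) for n mol of ideal gas going from (t1, v1) to (t2, v2).

    The first term is the temperature contribution, the second the volume one.
    """
    return n * cv * math.log(t2 / t1) + n * R * math.log(v2 / v1)


if __name__ == "__main__":
    # 1 mol compressed to half its volume at constant temperature: entropy falls.
    print(ideal_gas_entropy_change(1, CV_MONATOMIC, 300, 300, 2.0, 1.0))  # about -5.76 J/K
    # The same gas heated from 300 K to 600 K at fixed volume: entropy rises.
    print(ideal_gas_entropy_change(1, CV_MONATOMIC, 300, 600, 1.0, 1.0))  # about +8.64 J/K
```

Halving the volume of one mole at constant temperature lowers its entropy by about 5.76 J/K, while doubling its temperature at fixed volume raises it by about 8.64 J/K.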

Despite their differences, both enthalpy and entropy are essential for predicting whether a system will undergo change. When a process releases heat (a decrease in enthalpy) and increases entropy (dispersing energy), both factors favor change and it is spontaneous at any temperature, although it may still proceed slowly. When a process absorbs heat and reduces entropy, it is never spontaneous on its own and occurs only when driven by an external energy input or coupled to another process. Many natural and engineered processes fall between these extremes, with one factor favoring change and the other opposing it; in such cases temperature decides the outcome, because higher temperatures amplify the weight of the entropy contribution. This interplay is central to everything from chemical synthesis to biological energy cycles and environmental transformations.
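
The four sign combinations of ΔH and ΔS can be sorted mechanically using ΔG = ΔH − TΔS. The sketch below assumes ΔH and ΔS stay roughly constant with temperature; for the mixed cases it reports the crossover temperature ΔH/ΔS at which ΔG changes sign. The input values in the usage lines are illustrative.

```python
def spontaneity_regime(delta_h: float, delta_s: float) -> str:
    """Describe when delta_G = delta_H - T * delta_S is negative (T > 0).

    delta_h in J/mol, delta_s in J/(mol*K); both assumed independent of T.
    """
    if delta_h == 0 and delta_s == 0:
        return "at equilibrium at every temperature"
    if delta_h <= 0 and delta_s >= 0:
        # Heat released and entropy gained: both terms favor change.
        return "spontaneous at all temperatures"
    if delta_h >= 0 and delta_s <= 0:
        # Heat absorbed and entropy lost: both terms oppose change.
        return "never spontaneous"
    # Mixed signs: delta_G changes sign at T = delta_H / delta_S (positive here).
    crossover = delta_h / delta_s
    if delta_h < 0:
        return f"spontaneous below {crossover:.0f} K"  # enthalpy-driven
    return f"spontaneous above {crossover:.0f} K"  # entropy-driven


if __name__ == "__main__":
    examples = {
        "exothermic, entropy up": (-50e3, 100.0),
        "endothermic, entropy down": (50e3, -100.0),
        "melting ice": (6.01e3, 22.0),
        "freezing water": (-6.01e3, -22.0),
    }
    for label, (dh, ds) in examples.items():
        print(f"{label}: {spontaneity_regime(dh, ds)}")
```

The melting and freezing rows recover a crossover near 273 K, matching the familiar melting point of ice.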

Enthalpy and entropy also shape how scientists understand equilibrium. At equilibrium, the competing influences of enthalpy and entropy are balanced and the system shows no further net change: any energetic advantage of moving in one direction is exactly offset by the entropic advantage of moving in the other. This principle explains why some reactions proceed only partially, why mixtures settle at specific ratios, and why certain phases coexist, such as ice and liquid water at the melting point. The balance between enthalpy and entropy determines whether conditions favor ordered structures or disordered ones, whether energy becomes concentrated or dispersed, and whether matter organizes or spreads.
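
The link between this balance and how far a reaction proceeds is usually expressed through the standard relation K = exp(−ΔG°/RT), where ΔG° = ΔH° − TΔS° is the standard Gibbs free energy change. The sketch below, assuming R = 8.314 J/(mol·K) and a temperature of 298.15 K, shows how modest values of ΔG° give equilibrium constants near 1, meaning substantial amounts of both reactants and products remain.

```python
import math

R = 8.314  # gas constant, J/(mol*K)


def equilibrium_constant(delta_g_standard: float, temperature: float) -> float:
    """K = exp(-delta_G° / (R * T)); delta_G° in J/mol, temperature in K."""
    return math.exp(-delta_g_standard / (R * temperature))


if __name__ == "__main__":
    t = 298.15
    for dg in (-20e3, -5e3, 0.0, 5e3, 20e3):
        print(f"dG° = {dg / 1e3:+.0f} kJ/mol -> K = {equilibrium_constant(dg, t):.3g}")
```

A ΔG° of zero gives K = 1, and flipping the sign of ΔG° inverts K, so only large magnitudes push a reaction essentially to completion or leave it barely started.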

In everyday life, both enthalpy and entropy play subtle but profound roles. The cooling of a hot beverage as it sits on a table, the melting of ice cream in warm weather, the evaporation of sweat that cools the skin, and the combustion of fuel that powers vehicles all involve enthalpy changes. At the same time, the mixing of aromas in a kitchen, the fading of scents in the open air, the spreading of fog, and the tendency of objects to move toward thermal equilibrium are driven by entropy. These examples illustrate that enthalpy and entropy, though rooted in scientific theory, govern familiar experiences and ubiquitous natural patterns.

Ultimately, comparing enthalpy and entropy reveals two complementary perspectives on energy. Enthalpy describes the heat-related content and transformations within systems, while entropy describes the distribution and dispersal of that energy. One accounts for how much energy flows; the other reveals how energy naturally tends to spread. Enthalpy emphasizes structure, bonding, and thermal transfer; entropy emphasizes freedom, probability, and the direction of change. Together, they form the foundation for understanding why processes happen, how energy moves, and what makes certain transformations possible. They stand as two pillars of thermodynamic insight, shaping not only scientific understanding but also the behavior of the world in every moment.