De Morgan’s Law — Boolean Algebra and Logic Operations

De Morgan’s Law stands as one of the most important conceptual pillars in logic, computer science, mathematics, electrical engineering, and digital circuit design because it captures the deep relationship between negation and the way logical statements combine. Although many people first encounter the law through symbolic expressions, its power does not depend on formulas but on an intuitive idea: when you negate a compound statement, its structure flips in a precise and predictable way. De Morgan’s Law reveals that logical operations are not isolated tools but interconnected transformations that reflect how truth, falsity, choice, and exclusion work in reasoning. At the most fundamental level, the law explains how “and” relates to “or” under negation: negating an “and” of two statements yields an “or” of their negations, and negating an “or” yields an “and” of their negations. This seemingly simple insight shapes how computers process decisions, how search systems interpret queries, how digital circuits are built, and how programmers write conditionals, and it runs through fields from philosophical logic to modern artificial intelligence. Understanding De Morgan’s Law conceptually allows one to see the hidden symmetry within logic and appreciate why certain expressions can be transformed without changing their meaning.

To grasp the intuitive meaning of De Morgan’s Law, imagine a situation involving choices or conditions. If you have two requirements that must both be satisfied and you negate the entire idea of satisfying both, the negation does not say that neither requirement holds; instead, it says that at least one of the requirements fails. Likewise, if at least one of two possibilities is acceptable and you negate the entire idea of accepting either one, the negation becomes a requirement that both possibilities fail. This reversal is the core of De Morgan’s insight: negation changes not only the truth value of a statement but also the way its conditions are joined. The law highlights the duality between inclusion and exclusion, between requiring every condition and accepting any one of them, and between conjunction and disjunction. Even without formal notation, one can feel the logic by thinking about real-world decisions. If someone says, “I will not take both choices,” at least one option is being rejected, whereas “I will not take either choice” means both are rejected. This subtle but crucial distinction is exactly what De Morgan’s Law codifies.
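
Because there are only four combinations of truth values for two conditions, both readings above can be checked exhaustively. The following minimal Python sketch (function and variable names are illustrative) tests each law on every combination and also confirms that the tempting misreading, “not both” meaning “neither,” is wrong.

```python
from itertools import product


def check_de_morgan() -> list[tuple[bool, bool, bool, bool]]:
    """Test both De Morgan identities for every combination of two truth values.

    Returns one row per assignment: (a, b, not_both_holds, not_either_holds).
    """
    rows = []
    for a, b in product([False, True], repeat=2):
        # "Not both": negating an AND equals an OR of the negations.
        not_both_holds = (not (a and b)) == ((not a) or (not b))
        # "Not either": negating an OR equals an AND of the negations.
        not_either_holds = (not (a or b)) == ((not a) and (not b))
        rows.append((a, b, not_both_holds, not_either_holds))
    return rows


# The misreading "not both" == "neither" fails exactly when one input is True.
misreading_fails = [
    (a, b)
    for a, b in product([False, True], repeat=2)
    if (not (a and b)) != ((not a) and (not b))
]

for row in check_de_morgan():
    print(row)
print("misreading fails for:", misreading_fails)
```

Every row reports `True` for both laws, while the misreading fails for the two mixed assignments, `(False, True)` and `(True, False)`.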

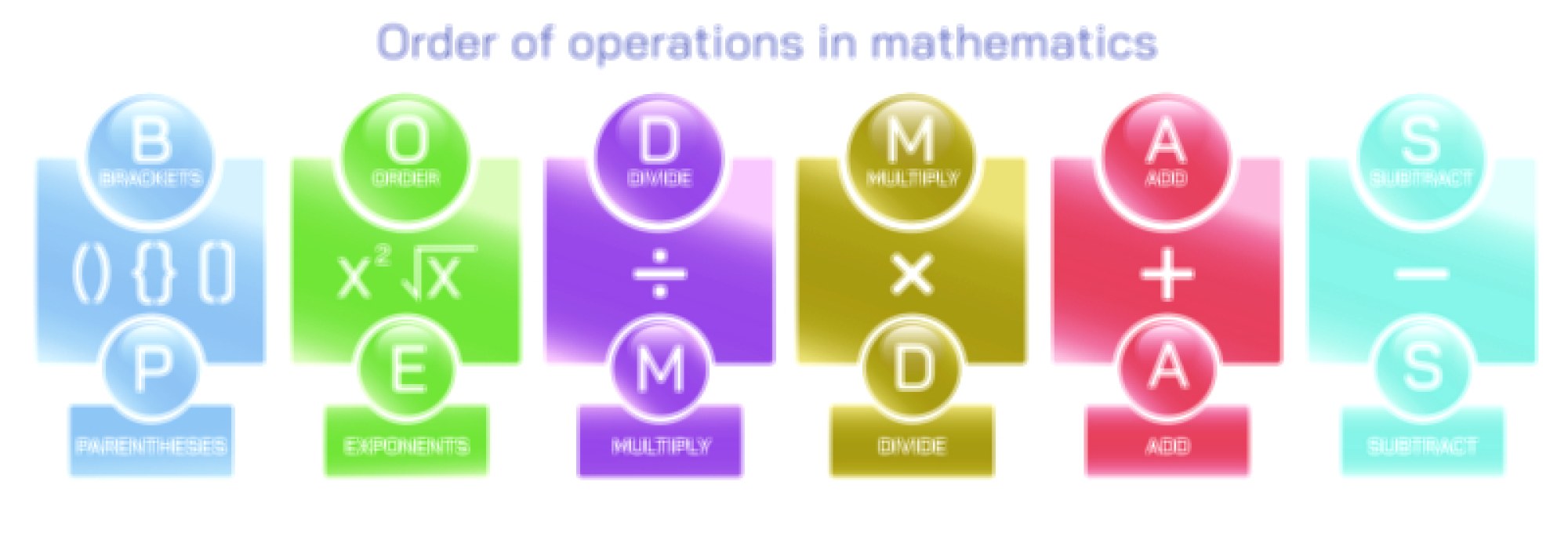

In Boolean algebra, where logical values are treated as algebraic variables manipulated through operations that mirror the structure of reasoning, De Morgan’s Law provides a method to rewrite expressions into equivalent forms that may be easier to compute, simplify, or implement in circuits. Boolean algebra uses operations that correspond to “and,” “or,” and “not,” and these operations form the basis of truth tables, logic gates, digital processors, and decision algorithms. When Boolean expressions become complex, they often include nested combinations of these operations. De Morgan’s Law allows such expressions to be transformed in ways that change their structure while preserving their meaning. This transformation is essential for optimization, because alternative forms of a logical expression may require fewer operations, simpler circuitry, or more efficient processing. More broadly, the law shows that a single logical condition can be written in several equivalent forms, and that moving between those forms preserves its truth value for every possible input.
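
In the overbar-and-plus notation common in Boolean algebra ($\overline{A}$ for “not,” $A \cdot B$ for “and,” $A + B$ for “or”), the two laws read as follows. The variables $A$ and $B$ are illustrative.

$$
\overline{A \cdot B} = \overline{A} + \overline{B}
\qquad\qquad
\overline{A + B} = \overline{A} \cdot \overline{B}
$$

As a short worked simplification, an expression with three negations and one “and” collapses to a single “or”:

$$
\begin{aligned}
\overline{\overline{A} \cdot \overline{B}}
  &= \overline{\overline{A}} + \overline{\overline{B}} && \text{(first law)} \\
  &= A + B && \text{(double negation)}
\end{aligned}
$$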

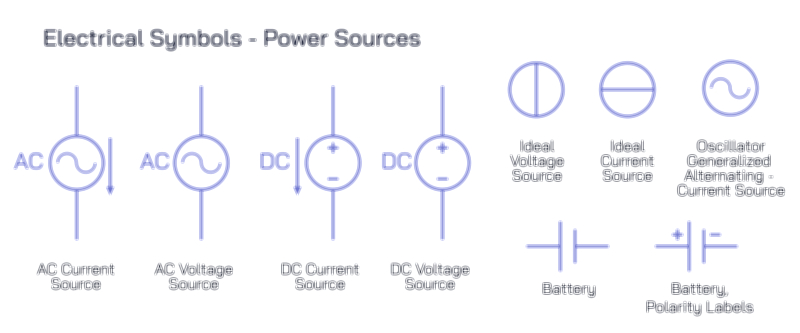

In digital electronics, De Morgan’s Law plays an especially critical role. Digital circuits rely on gates that perform logical operations. The “and” gate outputs a true signal only when all its inputs are true, the “or” gate outputs true when at least one input is true, and the “not” gate flips its input’s value. In practice, hardware designers often favor “nand” and “nor” gates, because in common technologies such as CMOS they are simpler to build than “and” and “or” gates, which are typically implemented as a “nand” or “nor” followed by an inverter. Each of these two gates is functionally complete: any logical operation, no matter how complex, can be built entirely from “nand” gates or entirely from “nor” gates. De Morgan’s Law is the key step in that construction because it expresses how negation interacts with “and” and “or”; for example, it shows that an “or” is the same as a “nand” of the two inverted inputs. Without this transformation, modern computer architecture, built upon layers of simple logic elements, would be far more complicated. De Morgan’s Law serves as the mathematical backbone that allows digital logic to operate within practical hardware constraints.
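
To make the construction concrete, here is a minimal Python sketch that models gates as Boolean functions. The function names are hypothetical, and this is an illustration of the logic rather than a hardware description. It builds “not,” “and,” and “or” from “nand” alone and from “nor” alone, then checks every construction against Python’s own operators. The two comments marked “De Morgan” are where the law does the work.

```python
from itertools import product


# Primitive gates: every other gate below is wired from one of these two.
def nand(a: bool, b: bool) -> bool:
    return not (a and b)


def nor(a: bool, b: bool) -> bool:
    return not (a or b)


# --- NAND-only constructions ---
def not_nand(a: bool) -> bool:
    return nand(a, a)  # tie both inputs together to get an inverter


def and_nand(a: bool, b: bool) -> bool:
    return not_nand(nand(a, b))  # invert the NAND output


def or_nand(a: bool, b: bool) -> bool:
    # De Morgan: A OR B = NOT(NOT A AND NOT B) = NAND(NOT A, NOT B)
    return nand(not_nand(a), not_nand(b))


# --- NOR-only constructions ---
def not_nor(a: bool) -> bool:
    return nor(a, a)


def or_nor(a: bool, b: bool) -> bool:
    return not_nor(nor(a, b))  # invert the NOR output


def and_nor(a: bool, b: bool) -> bool:
    # De Morgan: A AND B = NOT(NOT A OR NOT B) = NOR(NOT A, NOT B)
    return nor(not_nor(a), not_nor(b))


def constructions_match() -> bool:
    """Compare every construction against Python's operators on all inputs."""
    for a, b in product([False, True], repeat=2):
        if not_nand(a) != (not a) or not_nor(a) != (not a):
            return False
        if and_nand(a, b) != (a and b) or and_nor(a, b) != (a and b):
            return False
        if or_nand(a, b) != (a or b) or or_nor(a, b) != (a or b):
            return False
    return True


print(constructions_match())  # True
```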

In software development, De Morgan’s Law is equally influential because programmers constantly work with conditional statements. Whether in a simple “if” statement, a complex search algorithm, or a logical filtering operation, conditions often combine through “and” or “or” relationships, and developers frequently negate entire decision structures. When programmers rewrite conditions for clarity, for performance, or to avoid logical errors, De Morgan’s Law ensures that the transformed form retains exactly the same meaning. It prevents mistakes that arise from misunderstanding how negation interacts with compound decisions. For instance, a common error when negating a condition that requires two criteria is to negate each criterion but keep the “and”: the correct negation of “A and B” is “not A or not B,” not “not A and not B.” De Morgan’s Law acts as a conceptual safeguard against such subtle bugs, which can cause software to behave unpredictably or incorrectly. In complex systems such as operating systems, databases, networking protocols, and embedded devices, this reliability is crucial.
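
Here is a minimal Python sketch of the kind of slip this paragraph warns about, using a hypothetical posting rule: a user may post only when logged in and verified. The three functions differ only in how the guard clause negates that condition. The loop prints all four input combinations so the disagreement is visible.

```python
from itertools import product

# Hypothetical rule: a user may post only if they are logged in AND verified.


def can_post_original(logged_in: bool, verified: bool) -> bool:
    # Guard clause: reject anyone who does not meet both criteria.
    if not (logged_in and verified):
        return False
    return True


def can_post_rewritten(logged_in: bool, verified: bool) -> bool:
    # De Morgan: not (A and B) is equivalent to (not A) or (not B).
    if not logged_in or not verified:
        return False
    return True


def can_post_buggy(logged_in: bool, verified: bool) -> bool:
    # Common mistake: each operand is negated, but "and" is left unchanged.
    if not logged_in and not verified:
        return False
    return True


for logged_in, verified in product([False, True], repeat=2):
    print(
        f"logged_in={logged_in!s:5} verified={verified!s:5} "
        f"original={can_post_original(logged_in, verified)!s:5} "
        f"rewritten={can_post_rewritten(logged_in, verified)!s:5} "
        f"buggy={can_post_buggy(logged_in, verified)}"
    )
```

The original and rewritten guards agree on every input. The buggy guard lets through users who meet only one of the two criteria.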

From the perspective of formal logic and philosophy, De Morgan’s contribution highlights how negation functions as a transformative operator on thought structures. Logical reasoning often involves combining premises, negating statements, and exploring consequences. Philosophers analyzing the structure of arguments use De Morgan’s Law to understand how composite ideas behave under denial. Consider a statement like “It is not the case that both conditions are true.” This is logically equivalent to “At least one condition is false.” The transformation preserves meaning while shifting the perspective. Philosophers concerned with the foundations of logic, semantics, and language find De Morgan’s Law indispensable because it reveals coherence and symmetry within thought processes that might otherwise appear arbitrary. The law ensures that logical translations remain faithful and that negating complex propositions produces results consistent with our intuitive understanding of truth and falsehood.

In artificial intelligence and computational logic, De Morgan’s Law supports reasoning engines, search algorithms, and inference systems that depend on Boolean structures. Many decision systems operate by pruning or expanding search trees based on logical conditions, and the ability to rewrite or restructure logical expressions allows them to explore constraints more efficiently. In expert systems or symbolic reasoning engines, logical rules must sometimes be inverted, generalized, or simplified in order to determine feasible outcomes or identify contradictions. For example, formulas are commonly converted to conjunctive normal form before being handed to a SAT solver, and the textbook conversion begins by pushing negations inward with De Morgan’s Law. The law ensures that these transformations remain logically equivalent to their originals, preserving accuracy in automated reasoning. In neural networks, where explicit logical statements are usually absent, the law matters mainly when networks are used to emulate reasoning processes or to interpret symbolic data.
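
The inward push of negations that such systems rely on can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions. Formulas are represented as nested tuples in a hypothetical format of our own. `to_nnf` converts them to negation normal form, in which negation applies only to variables. A brute-force truth-table check confirms that the rewrite preserves meaning.

```python
from itertools import product
from typing import Union

# Hypothetical formula format (an assumption for this sketch):
#   "A"                                  a variable
#   ("not", e)                           negation
#   ("and", left, right) / ("or", left, right)
Expr = Union[str, tuple]


def to_nnf(e: Expr) -> Expr:
    """Push every negation down to the variables (negation normal form)."""
    if isinstance(e, str):
        return e
    op = e[0]
    if op in ("and", "or"):
        return (op, to_nnf(e[1]), to_nnf(e[2]))
    # op == "not"
    inner = e[1]
    if isinstance(inner, str):
        return e  # a negated variable is already a literal
    if inner[0] == "not":
        return to_nnf(inner[1])  # double negation: not not X == X
    # De Morgan: swap the connective and negate both operands.
    dual = "or" if inner[0] == "and" else "and"
    return (dual, to_nnf(("not", inner[1])), to_nnf(("not", inner[2])))


def evaluate(e: Expr, env: dict[str, bool]) -> bool:
    """Evaluate a formula under an assignment of truth values to variables."""
    if isinstance(e, str):
        return env[e]
    if e[0] == "not":
        return not evaluate(e[1], env)
    left, right = evaluate(e[1], env), evaluate(e[2], env)
    return (left and right) if e[0] == "and" else (left or right)


def equivalent(e1: Expr, e2: Expr, names: list[str]) -> bool:
    """Brute-force check over all 2**len(names) assignments."""
    for values in product([False, True], repeat=len(names)):
        env = dict(zip(names, values))
        if evaluate(e1, env) != evaluate(e2, env):
            return False
    return True


# Example rule: not (A and (B or not C))
rule = ("not", ("and", "A", ("or", "B", ("not", "C"))))
print(to_nnf(rule))
print(equivalent(rule, to_nnf(rule), ["A", "B", "C"]))
```

For the sample rule, `to_nnf` returns `('or', ('not', 'A'), ('and', ('not', 'B'), 'C'))`, that is, ¬A ∨ (¬B ∧ C). A production reasoner would use a richer representation and would avoid exhaustive checking, whose cost grows exponentially with the number of variables.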

Search engines and filtering systems also rely on De Morgan’s Law. When users enter search queries that include multiple conditions, the system must interpret how those conditions interact. Negations, exclusions, and combinations of keywords all depend on Boolean logic, and understanding how “not” interacts with “and” and “or” ensures that search interfaces return the intended results. Content filters, database queries, and access control systems likewise depend on the correct interpretation of logical relationships. De Morgan’s Law allows these systems to restructure complex conditions internally into equivalent forms, which can make them cheaper to evaluate over large data sets. Without the clarity offered by these logical transformations, many of the digital tools we rely on daily would struggle to interpret user intentions or to manage massive information flows.
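
As a toy illustration of that restructuring, the Python sketch below evaluates two negated queries over a hypothetical in-memory inverted index. Each query is computed once directly and once after applying De Morgan’s Law. The index contents and document IDs are invented for the example, and real search engines use far more elaborate machinery. The point is only this: an exclusion over an “or” can be computed as an intersection of single-term exclusions, and an exclusion over an “and” as a union of them.

```python
# Hypothetical toy inverted index: each term maps to the IDs of documents containing it.
INDEX: dict[str, set[int]] = {
    "python": {1, 2, 4},
    "tutorial": {2, 3, 4},
    "beginner": {1, 3},
}
ALL_DOCS: set[int] = {1, 2, 3, 4, 5}


def docs_with(term: str) -> set[int]:
    """Documents that contain the term (empty set for unknown terms)."""
    return INDEX.get(term, set())


def docs_without(term: str) -> set[int]:
    """Documents that do NOT contain the term: its complement within ALL_DOCS."""
    return ALL_DOCS - docs_with(term)


# Query: NOT (python OR tutorial)
direct_or = ALL_DOCS - (docs_with("python") | docs_with("tutorial"))
# De Morgan: (NOT python) AND (NOT tutorial), an intersection of two exclusions.
rewritten_or = docs_without("python") & docs_without("tutorial")

# Query: NOT (python AND beginner)
direct_and = ALL_DOCS - (docs_with("python") & docs_with("beginner"))
# De Morgan: (NOT python) OR (NOT beginner), a union of two exclusions.
rewritten_and = docs_without("python") | docs_without("beginner")

print(sorted(direct_or), sorted(rewritten_or))
print(sorted(direct_and), sorted(rewritten_and))
```

With this data, both forms of the first query return `[5]`, and both forms of the second return `[2, 3, 4, 5]`.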

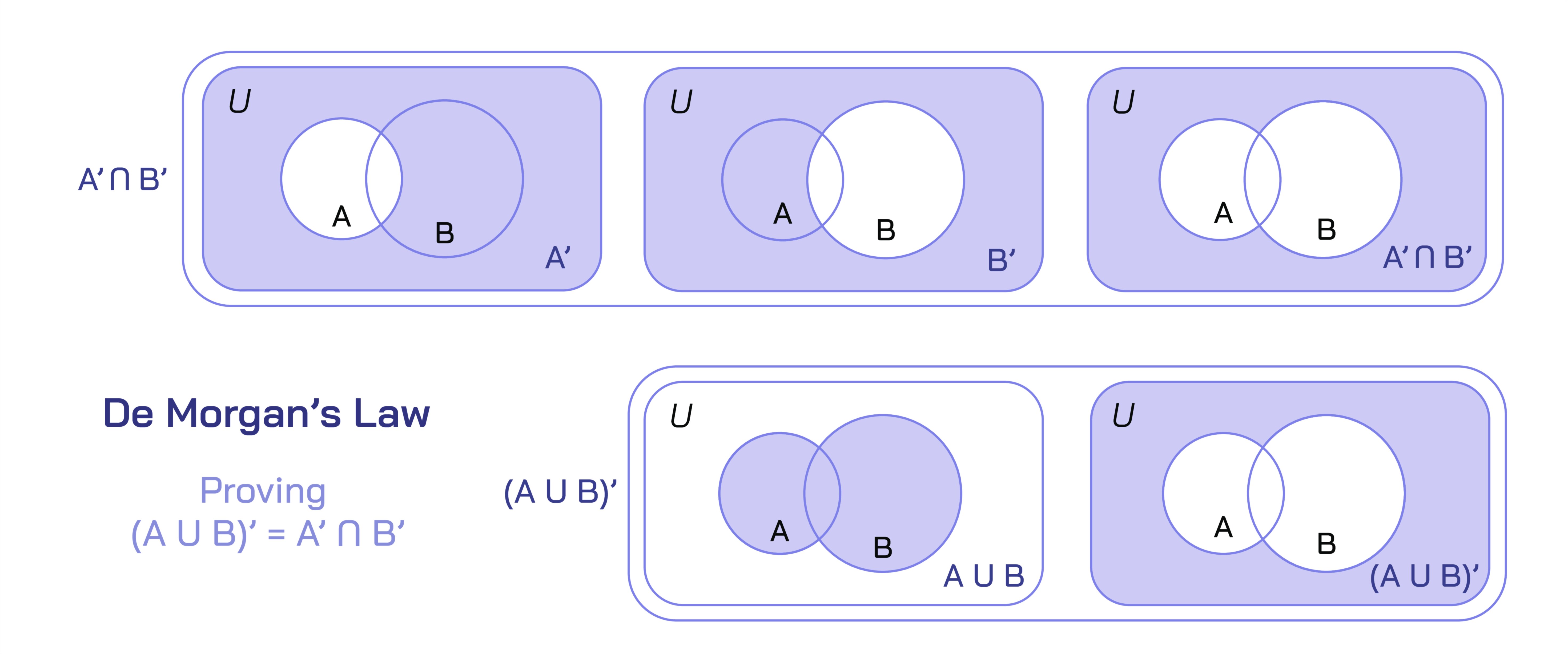

In mathematics, De Morgan’s Law plays a major role not only in Boolean algebra but also in set theory, because set operations mirror logical operations. The union of sets corresponds to “or,” the intersection corresponds to “and,” and the complement corresponds to negation. In this context, De Morgan’s Law describes how complements interact with union and intersection. The elements outside a union of two sets are exactly those that belong to neither set, which is the intersection of the complements. The elements outside an intersection are those missing from at least one set, which is the union of the complements. These set-theoretic interpretations extend the reach of De Morgan’s Law by connecting logic with geometry, probability, topology, and abstract algebra. Mathematicians rely on these transformations when proving theorems, designing structures, or analyzing relationships within or between sets. The law provides both a shortcut and a conceptual compass for navigating these transformations.
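
In set notation, with $A^{c}$ denoting the complement of $A$ relative to a fixed universal set, the two laws read as follows. They extend to any indexed family of sets $\{A_i\}_{i \in I}$. The membership argument on the last line shows how each set identity reduces to the corresponding logical one.

$$
(A \cup B)^{c} = A^{c} \cap B^{c}
\qquad\qquad
(A \cap B)^{c} = A^{c} \cup B^{c}
$$

$$
\Bigl(\bigcup_{i \in I} A_i\Bigr)^{c} = \bigcap_{i \in I} A_i^{c}
\qquad\qquad
\Bigl(\bigcap_{i \in I} A_i\Bigr)^{c} = \bigcup_{i \in I} A_i^{c}
$$

$$
x \in (A \cup B)^{c}
\iff \neg\,(x \in A \lor x \in B)
\iff x \notin A \land x \notin B
\iff x \in A^{c} \cap B^{c}
$$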

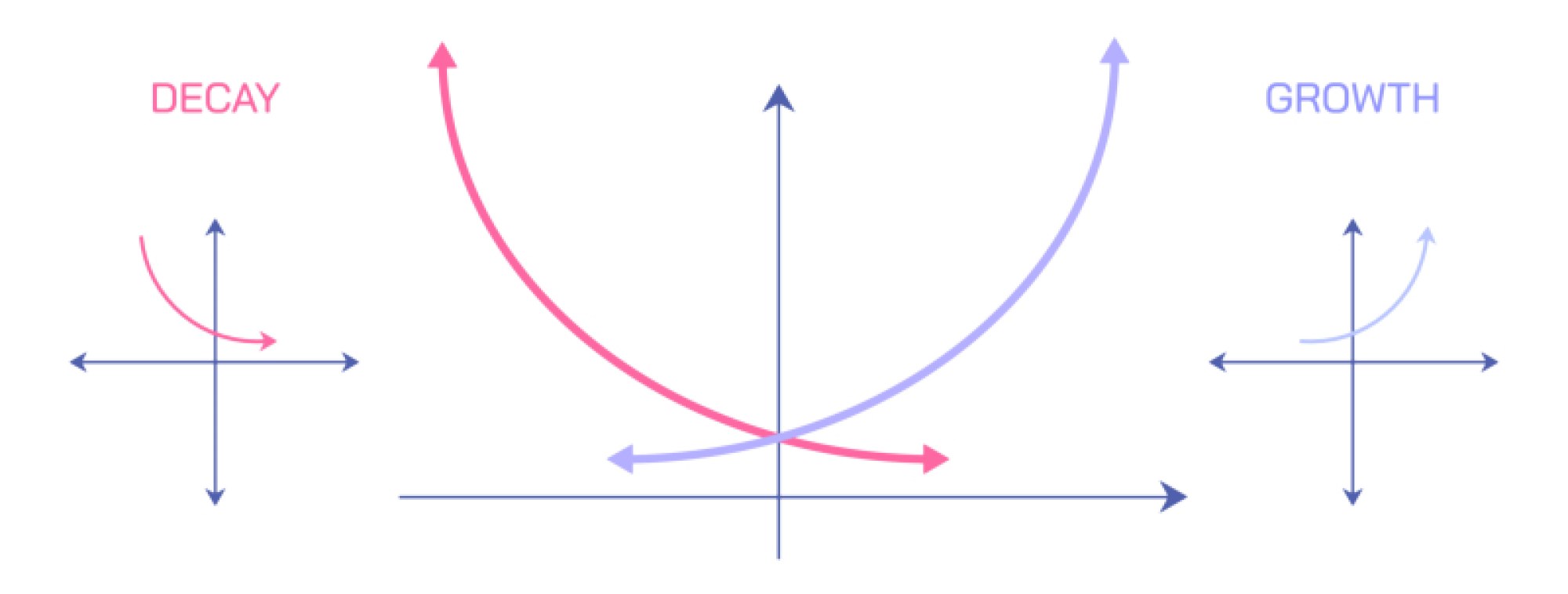

A deeper conceptual insight from De Morgan’s Law is that logical operations possess a duality. The “and” operation and the “or” operation are not fundamentally disconnected; they are mirrored versions of each other when observed through negation. This reveals an internal symmetry within the structure of logic. Each logical construct has a counterpart, and the presence of negation reshapes one operation into its dual. This duality resonates through mathematics, computing, electronics, and reasoning, demonstrating that logic is built upon intertwined structures that reflect one another. The existence of this duality is one of the reasons why Boolean algebra can be simplified, minimized, and optimized so effectively. It also explains a useful general rule. Swap every “and” for “or” and every “or” for “and” in an expression, and negate each of its variables. The result is equivalent to the negation of the original expression.
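
Applied to a small expression chosen for illustration, the general swap-and-negate rule can be checked step by step using only the two basic laws:

$$
\begin{aligned}
\neg\bigl((A \lor B) \land C\bigr)
  &\equiv \neg(A \lor B) \lor \neg C && \text{(negating an AND)} \\
  &\equiv (\neg A \land \neg B) \lor \neg C && \text{(negating an OR)}
\end{aligned}
$$

The result is the original expression $(A \lor B) \land C$ with $\land$ and $\lor$ exchanged and every variable negated.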

De Morgan’s Law also teaches an important lesson about the precision of language. Everyday conversation often mixes and confuses “and,” “or,” and “not,” but logical reasoning demands strict distinctions. Statements that appear similar in natural language may differ significantly in logical meaning. By clearly defining how negation interacts with compound statements, De Morgan’s Law eliminates ambiguity and helps people express ideas with accuracy. This clarity improves communication in technical writing, legal arguments, policy analysis, scientific modeling, and educational contexts where logical precision matters. Understanding the law enhances critical thinking by forcing one to examine how assumptions and conclusions depend on the structure of statements, not just their components.

Ultimately, De Morgan’s Law is far more than a rule for manipulating symbols. It is a conceptual lens through which one can view the structure of reasoning itself. It shows that logical systems have internal harmony, that transformations preserve meaning across different forms, and that negation reshapes but does not destroy logical relationships. Whether in digital circuits, formal proofs, computer code, linguistic analysis, or philosophical reasoning, the law provides a foundation for understanding how ideas combine and how their opposites behave. Its power lies in its universality and its ability to reveal order in areas where complexity might otherwise obscure the underlying logic. Through De Morgan’s Law, we gain a deeper appreciation for the elegance of logic and the coherence that binds together the systems of thought that shape modern science, technology, and reasoning.